Large language models (LLMs) like GPT, LLaMA, and others have taken the world by storm with their remarkable ability to understand and generate human-like text. However, despite their impressive capabilities, the standard method of training these models, known as “next-token prediction,” has some inherent limitations.

In next-token prediction, the model is trained to predict the next word in a sequence given the preceding words. While this approach has proven successful, it can lead to models that struggle with long-range dependencies and complex reasoning tasks. Moreover, the mismatch between the teacher-forcing training regime and the autoregressive generation process during inference can result in suboptimal performance.

A recent research paper by Gloeckle et al. (2024) from Meta AI introduces a novel training paradigm called “multi-token prediction” that aims to address these limitations and supercharge large language models. In this blog post, we’ll dive deep into the core concepts, technical details, and potential implications of this groundbreaking research.

What Is Multi-token Prediction?

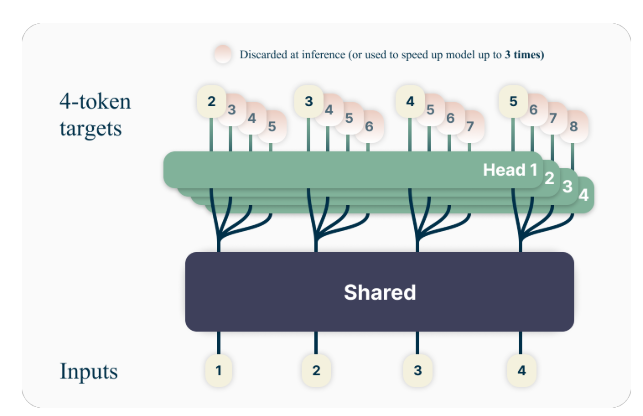

The key idea behind multi-token prediction is to train language models to predict multiple future tokens simultaneously, rather than just the next token. Specifically, during training, the model is tasked with predicting the next n tokens at each position in the training corpus, using n independent output heads operating on top of a shared model trunk.
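In symbols, the training objective described above can be sketched as a sum of n cross-entropy terms, one per output head. The notation here is an assumption made for illustration: $x_{1:t}$ is the context up to position $t$, $z_{1:t}$ is the latent representation produced by the shared trunk, and $P_\theta$ is the model's predictive distribution. The paper's exact formulation may differ in detail.

```latex
L_n = -\sum_{t} \sum_{i=1}^{n} \log P_\theta\!\left(x_{t+i} \mid z_{1:t}\right),
\qquad z_{1:t} = \mathrm{trunk}_\theta\!\left(x_{1:t}\right)
```

Setting $n = 1$ recovers the ordinary next-token loss, so multi-token prediction can be read as adding $n - 1$ auxiliary terms on top of it.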

For example, with a 4-token prediction setup, the model would be trained to predict the next 4 tokens at once, given the preceding context. This approach encourages the model to capture longer-range dependencies and develop a better understanding of the overall structure and coherence of the text.

A Toy Example

To better understand the concept of multi-token prediction, let’s consider a simple example. Suppose we have the following sentence:

“The quick brown fox jumps over the lazy dog.”

In the standard next-token prediction approach, the model would be trained to predict the next word given the preceding context. For instance, given the context “The quick brown fox jumps over the,” the model would be tasked with predicting the next word, “lazy.”

With multi-token prediction, however, the model would be trained to predict multiple future words at once. For example, if we set n=4, the model would be trained to predict up to the next 4 words simultaneously. Given the same context “The quick brown fox jumps over the,” the model would be tasked with predicting the sequence “lazy dog .” (Note the space after “dog”: the period is treated as its own token marking the end of the sentence, and because the sentence ends there, only three of the four target positions are filled.)
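The toy example above can be sketched in a few lines of Python. This is only an illustration: it assumes whitespace-separated words stand in for tokens, and the helper name `multi_token_targets` is hypothetical rather than anything from the paper.

```python
def multi_token_targets(tokens, n):
    """Pair every context prefix with up to n future tokens."""
    examples = []
    for t in range(len(tokens) - 1):
        context = tokens[: t + 1]
        # Near the end of the sequence fewer than n tokens remain.
        targets = tokens[t + 1 : t + 1 + n]
        examples.append((context, targets))
    return examples


# Assumption: words (and the final period) act as tokens.
sentence = "The quick brown fox jumps over the lazy dog ."
tokens = sentence.split()
examples = multi_token_targets(tokens, n=4)

context, targets = examples[6]
print(" ".join(context), "->", targets)
# The quick brown fox jumps over the -> ['lazy', 'dog', '.']
```

With n=1 the same helper yields the usual next-token targets, which makes the difference between the two setups easy to see side by side.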

Training the model to predict multiple future tokens at once encourages it to capture long-range dependencies and develop a better understanding of the overall structure and coherence of the text.

Technical Details

The authors propose a simple yet effective architecture for implementing multi-token prediction. The model consists of a shared transformer trunk that produces a latent representation of the input context, followed by n independent transformer layers (output heads) that predict the respective future tokens.

During training, the forward and backward passes are carefully orchestrated to minimize the GPU memory footprint. The shared trunk computes the latent representation, and then each output head sequentially performs its forward and backward pass, accumulating gradients at the trunk level. This approach avoids materializing all logit vectors and their gradients simultaneously, reducing the peak GPU memory utilization from O(nV + d) to O(V + d), where V is the vocabulary size and d is the dimension of the latent representation.

The Memory-efficient Implementation

One of the challenges in training multi-token predictors is reducing their GPU memory utilization. Since the vocabulary size (V) is typically much larger than the dimension of the latent representation (d), logit vectors become the GPU memory utilization bottleneck.
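To get a feel for the O(nV + d) and O(V + d) bounds, here is a small back-of-the-envelope calculation. The sizes are illustrative assumptions (V = 32,000, d = 4,096, n = 4), not figures from the paper, and the count covers a single position only.

```python
def peak_floats_per_position(n_heads, vocab_size, d_model, sequential):
    """Count the values held at once: live logit vectors plus one latent vector."""
    heads_live = 1 if sequential else n_heads
    return heads_live * vocab_size + d_model


# Illustrative sizes only (assumptions, not taken from the paper).
V, d, n = 32_000, 4_096, 4

naive = peak_floats_per_position(n, V, d, sequential=False)      # n*V + d
one_at_a_time = peak_floats_per_position(n, V, d, sequential=True)  # V + d

print(naive, one_at_a_time, round(naive / one_at_a_time, 2))
# 132096 36096 3.66
```

Because V dominates d, the ratio approaches n as the vocabulary grows, which is why the logits are the term worth attacking.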

To address this challenge, the authors propose a memory-efficient implementation that carefully adapts the sequence of forward and backward operations. Instead of materializing all logits and their gradients simultaneously, the implementation sequentially computes the forward and backward passes for each independent output head, accumulating gradients at the trunk level.

This approach avoids storing all logit vectors and their gradients in memory simultaneously, reducing the peak GPU memory utilization from O(nV + d) to O(V + d), where n is the number of future tokens being predicted.
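The ordering of operations described above can be sketched in plain Python for a single position. This is a minimal illustration under strong assumptions, not the authors' implementation: each head is reduced to one linear layer `W` (a list of V rows of length d), the trunk output `z` is given, and "peak memory" is just a count of logits alive at once rather than a real measurement.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def head_forward_backward(W, z, target):
    """Forward and backward for one head; the logits exist only inside this call."""
    logits = [sum(w * x for w, x in zip(row, z)) for row in W]
    probs = softmax(logits)
    loss = -math.log(probs[target])
    dlogits = probs[:]          # d(loss)/d(logits) = probs - one_hot(target)
    dlogits[target] -= 1.0
    dz = [sum(W[v][j] * dlogits[v] for v in range(len(W))) for j in range(len(z))]
    return loss, dz, len(logits)


def sequential_heads(heads, z, targets):
    """Process heads one at a time, accumulating the gradient at the trunk output."""
    total_loss, grad_z, peak_logits = 0.0, [0.0] * len(z), 0
    for W, target in zip(heads, targets):
        loss, dz, live = head_forward_backward(W, z, target)
        total_loss += loss
        grad_z = [g + d_ for g, d_ in zip(grad_z, dz)]
        peak_logits = max(peak_logits, live)  # never more than one head's logits
    return total_loss, grad_z, peak_logits


# Toy sizes and deterministic weights (all hypothetical).
d, V, n = 3, 5, 4
heads = [[[math.sin(7 * h + 3 * v + j) for j in range(d)] for v in range(V)]
         for h in range(n)]
z = [0.5, -1.0, 0.25]
targets = [1, 3, 0, 4]

loss, grad_z, peak = sequential_heads(heads, z, targets)
print(peak, n * V)  # logits alive at once: sequential vs. all heads together
```

In a real framework the accumulated `grad_z` would then be propagated through the trunk in a single backward pass, so the trunk's cost does not grow with n.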

Benefits of Multi-token Prediction

The research paper presents several compelling advantages of using multi-token prediction for training large language models:

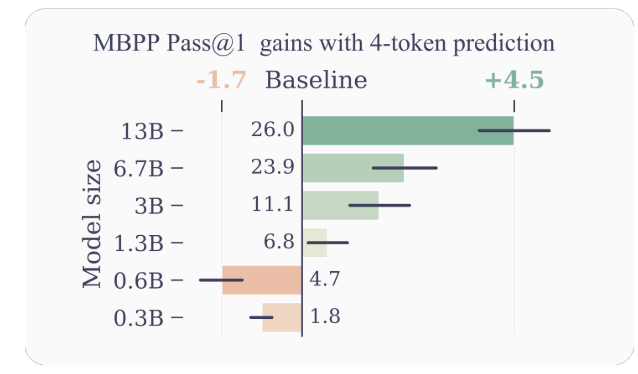

- Improved Sample Efficiency: By encouraging the model to predict multiple future tokens at once, multi-token prediction drives the model towards better sample efficiency. The authors demonstrate significant improvements in performance on code understanding and generation tasks, with models up to 13B parameters solving around 15% more problems on average.

- Faster Inference: The additional output heads trained with multi-token prediction can be leveraged for self-speculative decoding, a variant of speculative decoding that allows for parallel token prediction. This results in up to 3x faster inference times across a wide range of batch sizes, even for large models.

- Promoting Long-range Dependencies: Multi-token prediction encourages the model to capture longer-range dependencies and patterns in the data, which is particularly helpful for tasks that require understanding and reasoning over larger contexts.

- Algorithmic Reasoning: The authors present experiments on synthetic tasks that demonstrate the superiority of multi-token prediction models in developing induction heads and algorithmic reasoning capabilities, especially for smaller model sizes.

- Coherence and Consistency: By training the model to predict multiple future tokens simultaneously, multi-token prediction encourages the development of coherent and consistent representations. This is particularly helpful for tasks that require generating longer, more coherent text, such as storytelling, creative writing, or producing instruction manuals.

- Improved Generalization: The authors’ experiments on synthetic tasks suggest that multi-token prediction models exhibit better generalization capabilities, especially in out-of-distribution settings. This is likely due to the model’s ability to capture longer-range patterns and dependencies, which can help it extrapolate more effectively to unseen scenarios.
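To make the faster-inference point concrete, here is a toy sketch of greedy self-speculative decoding: the extra heads propose several tokens, and the next-token head keeps only the prefix it agrees with. Everything in it is a stand-in chosen for illustration. `next_token` and `draft_tokens` are hypothetical toy functions, not a real model, and a real system would verify the whole draft in one batched forward pass rather than one call per token.

```python
def next_token(context):
    """Toy next-token head: counts upward modulo 10."""
    return (context[-1] + 1) % 10


def draft_tokens(context, n):
    """Toy multi-token heads: guess n tokens ahead, with a deliberate error."""
    last = context[-1]
    draft = [(last + i) % 10 for i in range(1, n + 1)]
    if last % 3 == 0 and n >= 3:
        draft[2] = (draft[2] + 5) % 10  # the third head is sometimes wrong
    return draft


def greedy_decode(prompt, max_new_tokens):
    out = list(prompt)
    for _ in range(max_new_tokens):
        out.append(next_token(out))
    return out


def self_speculative_decode(prompt, n, max_new_tokens):
    out, iterations = list(prompt), 0
    while len(out) - len(prompt) < max_new_tokens:
        draft = draft_tokens(out, n)
        iterations += 1
        # The first draft token comes from the next-token head itself.
        accepted = [draft[0]]
        for tok in draft[1:]:
            if next_token(out + accepted) != tok:
                break  # reject this token and everything after it
            accepted.append(tok)
        out.extend(accepted)
    return out[: len(prompt) + max_new_tokens], iterations


spec_out, iterations = self_speculative_decode([0], n=4, max_new_tokens=12)
print(spec_out == greedy_decode([0], 12), iterations)
# True 4
```

The output matches plain greedy decoding token for token; the saving comes only from needing fewer decoding iterations when the drafts are mostly right.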

Examples and Intuitions

To provide more intuition on why multi-token prediction works so well, let’s consider a few examples:

- Code Generation: In the context of code generation, predicting multiple tokens simultaneously can help the model understand and generate more complex code structures. For instance, when generating a function definition, predicting just the next token might not provide enough context for the model to generate the entire function signature correctly. However, by predicting multiple tokens at once, the model can better capture the dependencies between the function name, parameters, and return type, leading to more accurate and coherent code generation.

- Natural Language Reasoning: Consider a scenario where a language model is tasked with answering a question that requires reasoning over multiple steps or pieces of information. By predicting multiple tokens simultaneously, the model can better capture the dependencies between the different parts of the reasoning process, leading to more coherent and accurate responses.

- Long-form Text Generation: When generating long-form text, such as stories, articles, or reports, maintaining coherence and consistency over an extended span can be challenging for language models trained with next-token prediction. Multi-token prediction encourages the model to develop representations that capture the overall structure and flow of the text, potentially leading to more coherent and consistent long-form generations.

Limitations and Future Directions

While the results presented in the paper are impressive, there are a few limitations and open questions that warrant further investigation:

- Optimal Number of Tokens: The paper explores different values of n (the number of future tokens to predict) and finds that n=4 works well for many tasks. However, the optimal value of n may depend on the specific task, dataset, and model size. Developing principled methods for determining the optimal n could lead to further performance improvements.

- Vocabulary Size and Tokenization: The authors note that the optimal vocabulary size and tokenization strategy for multi-token prediction models may differ from those used for next-token prediction models. Exploring this aspect could lead to better trade-offs between compressed sequence length and computational efficiency.

- Auxiliary Prediction Losses: The authors suggest that their work could spur interest in developing novel auxiliary prediction losses for large language models, beyond the standard next-token prediction. Investigating other auxiliary losses and their combinations with multi-token prediction is an exciting research direction.

- Theoretical Understanding: While the paper provides some intuitions and empirical evidence for the effectiveness of multi-token prediction, a deeper theoretical understanding of why and how this approach works so well would be valuable.

Conclusion

The research paper “Better & Faster Large Language Models via Multi-token Prediction” by Gloeckle et al. introduces a novel training paradigm that has the potential to significantly improve the performance and capabilities of large language models. By training models to predict multiple future tokens simultaneously, multi-token prediction encourages the development of long-range dependencies, algorithmic reasoning abilities, and better sample efficiency.

The technical implementation proposed by the authors is elegant and computationally efficient, making it feasible to apply this approach to large-scale language model training. Furthermore, the ability to leverage self-speculative decoding for faster inference is a significant practical advantage.

While there are still open questions and areas for further exploration, this research represents an exciting step forward in the field of large language models. As the demand for more capable and efficient language models continues to grow, multi-token prediction could become a key component in the next generation of these powerful AI systems.