Video frame interpolation (VFI) is an open problem in generative video research. The challenge is to generate intermediate frames between two existing frames in a video sequence.

The FILM framework, a collaboration between Google and the University of Washington, proposed an effective frame interpolation method that remains popular in hobbyist and professional spheres. On the left, we can see the two separate and distinct frames superimposed; in the middle, the ‘end frame’; and on the right, the final synthesis between the frames. Sources: https://film-net.github.io/ and https://arxiv.org/pdf/2202.04901

Broadly speaking, this technique dates back over a century, and has been used in traditional animation since then. In that context, master ‘keyframes’ would be generated by a principal animation artist, while the work of ‘tweening’ intermediate frames would be carried out by other staffers, as a more menial task.

Prior to the rise of generative AI, frame interpolation was used in projects such as Real-Time Intermediate Flow Estimation (RIFE), Depth-Aware Video Frame Interpolation (DAIN), and Google’s Frame Interpolation for Large Motion (FILM, see above) to increase the frame rate of an existing video, or to enable artificially-generated slow-motion effects. This is accomplished by splitting out the existing frames of a clip and generating estimated intermediate frames between them.
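To make concrete what these systems improve on, the sketch below shows the naive alternative: doubling a clip's frame rate by cross-fading each pair of neighboring frames. This is not how RIFE, DAIN or FILM work (they estimate motion and move content along it); it is only an illustrative baseline, and the function name and toy clip are hypothetical.

```python
import numpy as np

def double_frame_rate_naive(frames: np.ndarray) -> np.ndarray:
    """Insert one cross-faded frame between each pair of consecutive frames.

    frames: array of shape (N, H, W, C).
    Returns a float array of shape (2N - 1, H, W, C).
    """
    frames = frames.astype(np.float64)
    n = frames.shape[0]
    out = np.empty((2 * n - 1,) + frames.shape[1:], dtype=np.float64)
    out[0::2] = frames                              # original frames at even indices
    out[1::2] = 0.5 * (frames[:-1] + frames[1:])    # 50/50 blends at odd indices
    return out

# Toy clip: a single bright pixel moves from x=0 to x=2 between two frames.
clip = np.zeros((2, 1, 4, 1))
clip[0, 0, 0, 0] = 1.0
clip[1, 0, 2, 0] = 1.0
doubled = double_frame_rate_naive(clip)
# The blended middle frame holds two half-bright 'ghosts' (at x=0 and x=2)
# instead of one full-bright pixel at the true midpoint x=1.
```

The ghosting in the middle frame is exactly the failure that motion-aware interpolators are designed to avoid.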

VFI is also used in the development of better video codecs and, more generally, in optical flow-based systems (including generative systems) that use advance knowledge of upcoming keyframes to optimize and shape the interstitial content that precedes them.

End Frames in Generative Video Systems

Modern generative systems such as Luma and Kling allow users to specify a start and an end frame, and can perform this task by analyzing keypoints in the two images and estimating a trajectory between them.

As we can see in the examples below, providing a ‘closing’ keyframe better allows the generative video system (in this case, Kling) to maintain aspects such as identity, even if the results are not perfect (particularly with large motions).

Kling is one of a growing number of video generators, including Runway and Luma, that allow the user to specify an end frame. In most cases, minimal motion will lead to the most realistic and least-flawed results. Source: https://www.youtube.com/watch?v=8oylqODAaH8

In the above example, the person’s identity is consistent between the two user-provided keyframes, leading to a relatively consistent video generation.

Where only the start frame is provided, the generative system’s window of attention is not usually large enough to ‘remember’ what the person looked like at the start of the video. Rather, the identity is likely to shift a little with each frame, until all resemblance is lost. In the example below, a starting image was uploaded, and the person’s movement was guided by a text prompt:

Without an end frame, Kling has only a small group of immediately preceding frames to guide the generation of the next frames. In cases where any significant movement is required, this atrophy of identity becomes severe.

We can see that the actor’s resemblance is not resilient to the instructions, since the generative system does not know what he would look like if he were smiling, and he is not smiling in the seed image (the only available reference).

The majority of viral generative clips are carefully curated to de-emphasize these shortcomings. However, the progress of temporally consistent generative video systems may depend on new developments from the research sector in regard to frame interpolation, since the only possible alternative is a dependence on traditional CGI as a driving ‘guide’ video (and even in this case, consistency of texture and lighting is currently difficult to achieve).

Additionally, the slow, iterative nature of deriving each new frame from a small group of recent frames makes it very difficult to achieve large and bold motions. This is because an object that is moving rapidly across the frame may travel from one side to the other in the space of a single frame, contrary to the more gradual movements on which the system is likely to have been trained.
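A little arithmetic shows how quickly per-frame displacement grows with speed. The figures below (a 1920-pixel-wide frame at 24fps) are hypothetical, chosen only for illustration, and the helper function is not from any of the systems discussed.

```python
def pixels_per_frame(frame_width_px: float, crossing_time_s: float, fps: float) -> float:
    """Average horizontal displacement per frame for an object that crosses
    the full frame width in `crossing_time_s` seconds at `fps` frames/second."""
    frames_for_crossing = crossing_time_s * fps
    return frame_width_px / frames_for_crossing

# Hypothetical 1920 px wide shot at 24 fps:
fast = pixels_per_frame(1920, 0.5, 24)   # 1920 / 12  -> 160 px per frame
slow = pixels_per_frame(1920, 8.0, 24)   # 1920 / 192 -> 10 px per frame
```

A sixteen-fold jump in per-frame displacement is the kind of gap that a model conditioned on a few recent frames, and trained mostly on gradual motion, struggles to bridge.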

Likewise, a large and bold change of pose may lead not only to identity shift, but to vivid non-congruities:

In this example from Luma, the requested movement does not appear to be well-represented in the training data.

Framer

This brings us to an interesting recent paper from China, which claims to have achieved a new state of the art in authentic-looking frame interpolation, and which is the first of its kind to offer drag-based user interaction.

Framer allows the user to direct motion using an intuitive drag-based interface, though it also has an ‘automatic’ mode. Source: https://www.youtube.com/watch?v=4MPGKgn7jRc

Drag-centric applications have become frequent in the literature lately, as the research sector struggles to provide instrumentalities for generative systems that are not based on the fairly crude results obtained by text prompts.

The new system, titled Framer, can not only follow user-guided drags, but also has a more conventional ‘autopilot’ mode. Besides conventional tweening, the system is capable of producing time-lapse simulations, as well as morphing and novel views of the input image.

Interstitial frames generated for a time-lapse simulation in Framer. Source: https://arxiv.org/pdf/2410.18978

In regard to the production of novel views, Framer crosses over a little into the territory of Neural Radiance Fields (NeRF), though it requires only two images, whereas NeRF generally requires six or more input views.

In tests, Framer, which is founded on Stability.ai’s Stable Video Diffusion latent diffusion generative video model, was able to outperform rival approaches in a user study.

At the time of writing, the code is set to be released at GitHub. Video samples (from which the above images are derived) are available at the project site, and the researchers have also released a YouTube video.

The new paper is titled Framer: Interactive Frame Interpolation, and comes from nine researchers across Zhejiang University and the Alibaba-backed Ant Group.

Method

Framer uses keypoint-based interpolation in either of its two modalities, wherein the input image is evaluated for basic topology, and ‘movable’ points are assigned where necessary. In effect, these points are equivalent to facial landmarks in ID-based systems, but generalize to any surface.

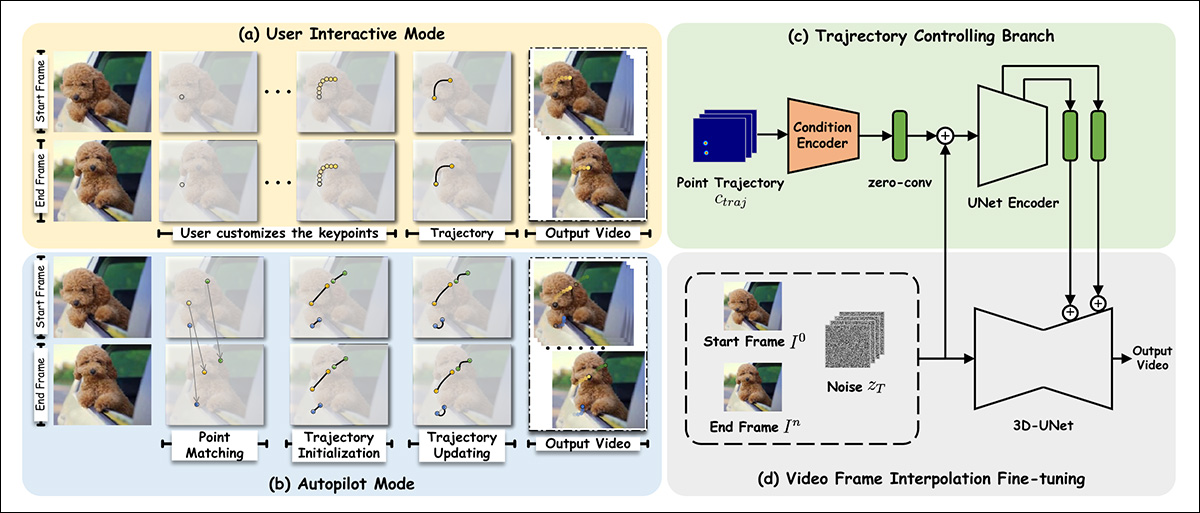

The researchers fine-tuned Stable Video Diffusion (SVD) on the OpenVid-1M dataset, adding an additional last-frame synthesis capability. This facilitates a trajectory-control mechanism (top right in the schema image below) that can evaluate a path toward the end frame (or back from it).

Schema for Framer.

Regarding the addition of last-frame conditioning, the authors state:

‘To preserve the visual prior of the pre-trained SVD as much as possible, we follow the conditioning paradigm of SVD and inject end-frame conditions in the latent space and semantic space, respectively.

‘Specifically, we concatenate the VAE-encoded latent feature of the first [frame] with the noisy latent of the first frame, as did in SVD. Additionally, we concatenate the latent feature of the last frame, z_n, with the noisy latent of the end frame, considering that the conditions and the corresponding noisy latents are spatially aligned.

‘In addition, we extract the CLIP image embedding of the first and last frames separately and concatenate them for cross-attention feature injection.’
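The two injection routes in the quote can be sketched with plain arrays. This is a minimal illustration under stated assumptions, not Framer's code: it follows the quote literally (first-frame latent attached to the first noisy frame, last-frame latent to the last, zeros in between), whereas the public SVD pipeline, as far as we know, repeats the first-frame latent across all frames, so the exact layout in Framer may differ. Stacking the two CLIP embeddings along a token axis is likewise an assumption, and the toy sizes are hypothetical.

```python
import numpy as np

def build_unet_input(noisy_latents, z_first, z_last):
    """Concatenate end-point conditions onto noisy video latents along channels.

    noisy_latents: (T, C, H, W) noisy latents for the T frames being generated.
    z_first, z_last: (C, H, W) VAE latents of the first and last input frames.
    Returns (T, 2C, H, W). Frame 0 carries z_first in its extra channels, the
    final frame carries z_last, and intermediate frames carry zeros.
    """
    cond = np.zeros_like(noisy_latents)
    cond[0] = z_first
    cond[-1] = z_last
    return np.concatenate([noisy_latents, cond], axis=1)

def build_cross_attention_context(clip_first, clip_last):
    """Stack two (D,) CLIP image embeddings into a (2, D) sequence of context tokens."""
    return np.stack([clip_first, clip_last], axis=0)

rng = np.random.default_rng(0)
T, C, H, W, D = 5, 4, 8, 8, 16          # toy sizes, not SVD's real ones
noisy = rng.normal(size=(T, C, H, W))
z1, zn = rng.normal(size=(C, H, W)), rng.normal(size=(C, H, W))
unet_in = build_unet_input(noisy, z1, zn)
context = build_cross_attention_context(rng.normal(size=D), rng.normal(size=D))
```

Concatenating in the latent space works because each condition is spatially aligned with the frame it is attached to, which is the point the authors make; the CLIP route adds global, semantic information that is not tied to pixel positions.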

For drag-based functionality, the trajectory module leverages the Meta AI-led CoTracker framework, which generates a large number of candidate point trajectories. These are then slimmed down to between one and ten trajectories.
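The reduction from dense tracks to a handful of trajectories might look like the sketch below. The selection rule here (discard any track that is occluded in some frame, then sample one to ten of the rest uniformly at random) is an assumption for illustration; the paper's actual criterion may weight tracks differently. The random array merely stands in for tracker output, which would normally come from CoTracker.

```python
import numpy as np

def sample_trajectories(tracks, visible, rng, max_k=10):
    """Pick between 1 and max_k point trajectories from dense tracker output.

    tracks:  (N, T, 2) array of (x, y) positions, one row per tracked point.
    visible: (N, T) boolean array, True where the point is visible.
    Returns (k, T, 2) with 1 <= k <= min(max_k, number of usable tracks),
    or an empty (0, T, 2) array if no track is visible in every frame.
    """
    usable = np.flatnonzero(visible.all(axis=1))   # keep tracks never occluded
    if usable.size == 0:
        return tracks[:0]
    k = int(rng.integers(1, min(max_k, usable.size) + 1))  # high bound is exclusive
    chosen = rng.choice(usable, size=k, replace=False)
    return tracks[chosen]

rng = np.random.default_rng(42)
tracks = rng.uniform(0, 256, size=(50, 14, 2))   # stand-in for tracker output
visible = np.ones((50, 14), dtype=bool)
visible[:5, 3] = False                           # first five points get occluded
picked = sample_trajectories(tracks, visible, rng)
```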

The obtained point coordinates are then transformed via a method inspired by the DragNUWA and DragAnything architectures. This produces a Gaussian heatmap, which individuates the target areas for movement.
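Turning point coordinates into Gaussian heatmaps is a standard rasterization step, sketched below as one map per frame. The blob width (`sigma=5.0`) and the use of `max()` to merge overlapping blobs are assumptions, not values taken from the paper.

```python
import numpy as np

def gaussian_heatmap(points, height, width, sigma=5.0):
    """Render (x, y) points as a single-channel map of Gaussian blobs.

    points: iterable of (x, y) pixel coordinates for one frame.
    Returns a (height, width) array; each blob peaks at 1.0 on its point,
    and overlapping blobs are merged with max() so values stay in [0, 1].
    """
    ys, xs = np.mgrid[0:height, 0:width]
    heatmap = np.zeros((height, width), dtype=np.float64)
    for x, y in points:
        blob = np.exp(-((xs - x) ** 2 + (ys - y) ** 2) / (2.0 * sigma ** 2))
        heatmap = np.maximum(heatmap, blob)
    return heatmap

def trajectory_heatmaps(trajectory_set, height, width, sigma=5.0):
    """trajectory_set: (k, T, 2). Returns (T, height, width), one map per frame."""
    num_frames = trajectory_set.shape[1]
    return np.stack([gaussian_heatmap(trajectory_set[:, t], height, width, sigma)
                     for t in range(num_frames)])

# One hypothetical point moving right along row y=20 over three frames.
traj = np.array([[[10.0, 20.0], [30.0, 20.0], [50.0, 20.0]]])
maps = trajectory_heatmaps(traj, height=64, width=64)
```

Soft blobs, rather than single hot pixels, give the conditioning network a spatial region to respond to, which is what ‘individuating the target areas’ amounts to in practice.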

Subsequently, the data is fed to the conditioning mechanisms of ControlNet, an ancillary conformity system originally designed for Stable Diffusion, and since adapted to other architectures.
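ControlNet's key trick is that its control branch joins the frozen backbone through zero-initialized layers, so at the start of training the added signal is exactly zero and the pretrained model's behavior is untouched. The sketch below shows only that injection step, with a 1×1 ‘zero convolution’ written in NumPy; it omits the trainable encoder copy that produces the control features, and it assumes, rather than confirms, that Framer's ControlNet follows this standard design.

```python
import numpy as np

class ZeroConv1x1:
    """A 1x1 convolution whose weights and bias start at zero, as in ControlNet."""

    def __init__(self, channels):
        self.weight = np.zeros((channels, channels))
        self.bias = np.zeros(channels)

    def __call__(self, x):
        # x: (C, H, W); a 1x1 conv is a per-pixel linear map over channels
        return np.einsum("oc,chw->ohw", self.weight, x) + self.bias[:, None, None]

def inject_control(backbone_features, control_features, zero_conv):
    """Add the control branch's output to the frozen backbone's features."""
    return backbone_features + zero_conv(control_features)

rng = np.random.default_rng(1)
features = rng.normal(size=(8, 16, 16))   # frozen backbone activations
control = rng.normal(size=(8, 16, 16))    # e.g. encoded trajectory heatmaps
zero_conv = ZeroConv1x1(8)
out_at_init = inject_control(features, control, zero_conv)
zero_conv.weight = 0.1 * np.eye(8)        # pretend training has moved the weights
out_after = inject_control(features, control, zero_conv)
```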

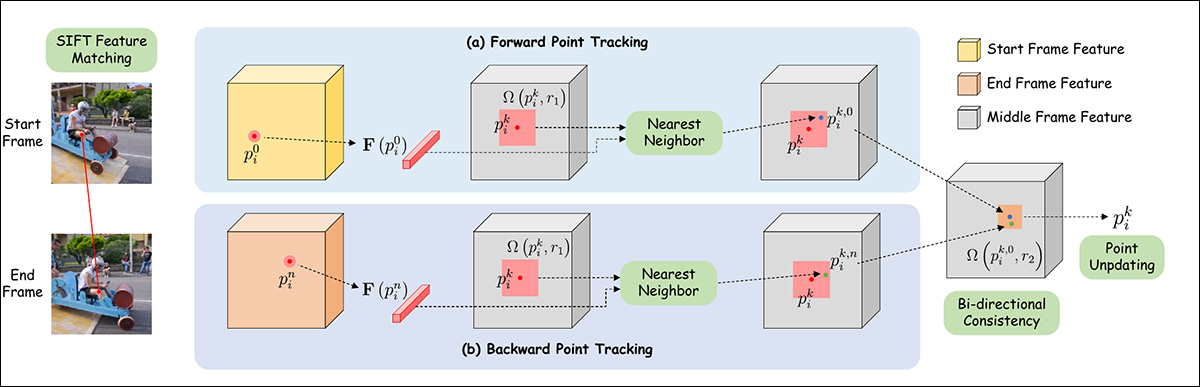

For autopilot mode, feature matching is initially carried out via SIFT, yielding a trajectory that can then be passed to an auto-updating mechanism inspired by DragGAN and DragDiffusion.

Schema for point trajectory estimation in Framer.
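The matching stage can be approximated as below: Lowe's ratio test over keypoint descriptors, followed by a straight-line path between each matched pair as an initial trajectory. Keypoint detection itself is omitted (OpenCV exposes SIFT via `cv2.SIFT_create`), the random descriptors are stand-ins, and the straight-line initialization is an assumption; in Framer the paths are subsequently refined by the DragGAN/DragDiffusion-inspired updating step, which is not shown.

```python
import numpy as np

def ratio_test_matches(desc_a, desc_b, ratio=0.75):
    """Lowe-style ratio test: keep a match from A to B only when the nearest
    descriptor in B is clearly closer than the second-nearest.

    desc_a: (Na, D), desc_b: (Nb, D) with Nb >= 2. Returns a list of (i, j) pairs.
    """
    dists = np.linalg.norm(desc_a[:, None, :] - desc_b[None, :, :], axis=2)  # (Na, Nb)
    matches = []
    for i, row in enumerate(dists):
        nearest, second = np.argsort(row)[:2]
        if row[nearest] < ratio * row[second]:
            matches.append((i, int(nearest)))
    return matches

def linear_trajectories(pts_start, pts_end, num_frames):
    """Initialize each matched point's path as a straight line.

    pts_start, pts_end: (k, 2). Returns (k, num_frames, 2); frame 0 equals
    pts_start and the final frame equals pts_end.
    """
    t = np.linspace(0.0, 1.0, num_frames)[None, :, None]   # (1, T, 1)
    return pts_start[:, None, :] * (1.0 - t) + pts_end[:, None, :] * t

rng = np.random.default_rng(7)
desc_end = rng.normal(size=(6, 128))                               # end-frame descriptors
desc_start = desc_end[[2, 4]] + 0.01 * rng.normal(size=(2, 128))   # near-copies of 2 and 4
matches = ratio_test_matches(desc_start, desc_end)
paths = linear_trajectories(np.array([[10.0, 10.0]]), np.array([[30.0, 50.0]]), num_frames=5)
```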

Data and Tests

For the fine-tuning of Framer, the spatial attention and residual blocks were frozen, and only the temporal attention layers and residual blocks were trained.
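Selective freezing usually comes down to partitioning parameters by name. The sketch below marks anything whose name contains `temporal` as trainable and freezes the rest; the parameter names are hypothetical, modeled loosely on spatio-temporal UNet naming, and Framer's actual module names may differ.

```python
def split_trainable(param_names, trainable_keywords=("temporal",)):
    """Partition parameter names into trainable and frozen groups.

    A parameter is trainable if any keyword appears in its name; everything
    else (spatial attention, spatial residual blocks, ...) stays frozen.
    """
    trainable, frozen = [], []
    for name in param_names:
        if any(k in name for k in trainable_keywords):
            trainable.append(name)
        else:
            frozen.append(name)
    return trainable, frozen

# Hypothetical parameter names, for illustration only.
names = [
    "down_blocks.0.attentions.0.transformer_blocks.0.attn1.to_q.weight",
    "down_blocks.0.attentions.0.temporal_transformer_blocks.0.attn1.to_q.weight",
    "down_blocks.0.resnets.0.spatial_res_block.conv1.weight",
    "down_blocks.0.resnets.0.temporal_res_block.conv1.weight",
]
trainable, frozen = split_trainable(names)
```

In PyTorch, the same split would typically be applied by iterating over `model.named_parameters()` and calling `param.requires_grad_(False)` on the frozen group, so that only the temporal layers receive gradient updates.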

The model was trained for 10,000 iterations under AdamW, at a learning rate of 1e-4 and a batch size of 16. Training took place across 16 NVIDIA A100 GPUs.
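Assuming 16 is the global batch size, these settings amount to one clip per GPU per step and 10,000 × 16 = 160,000 training samples seen in total. For reference, a single AdamW update (Adam moments plus decoupled weight decay) is sketched below with the stated learning rate; the betas, epsilon and weight decay are assumptions (PyTorch's defaults), since they are not given here.

```python
import numpy as np

def adamw_step(param, grad, m, v, step, lr=1e-4, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=1e-2):
    """One AdamW update: bias-corrected Adam moments plus decoupled weight decay.

    `step` is 1-based. Returns the updated (param, m, v).
    """
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad ** 2
    m_hat = m / (1.0 - beta1 ** step)
    v_hat = v / (1.0 - beta2 ** step)
    # Decay acts directly on the weights instead of being folded into the gradient
    param = param - lr * (m_hat / (np.sqrt(v_hat) + eps) + weight_decay * param)
    return param, m, v

p1, m1, v1 = adamw_step(np.array([1.0, -2.0]), np.array([0.5, -0.5]),
                        np.zeros(2), np.zeros(2), step=1)
```

On the first step the bias-corrected ratio is roughly the sign of the gradient, so each weight moves by about lr, plus the small decay term lr × weight_decay × weight.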

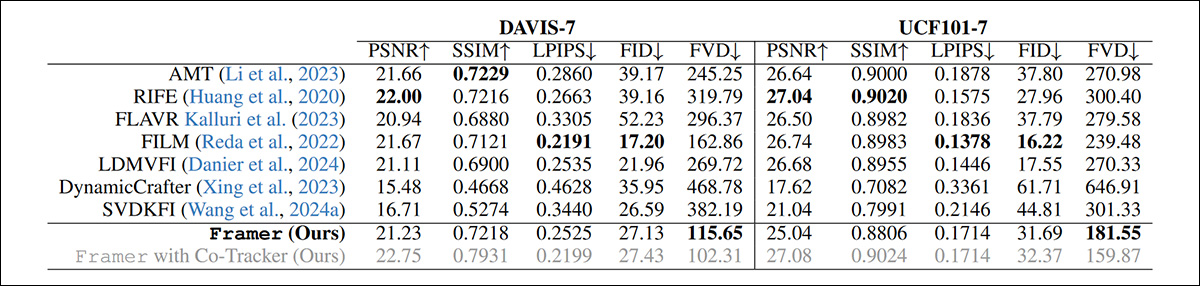

Since prior approaches to the problem do not offer drag-based editing, the researchers opted to compare Framer’s autopilot mode to the standard functionality of older offerings.

The frameworks tested in the category of current diffusion-based video generation systems were LDMVFI, DynamiCrafter, and SVDKFI. For ‘traditional’ video systems, the rival frameworks were AMT, RIFE, FLAVR, and the aforementioned FILM.

In addition to the user study, tests were conducted over the DAVIS and UCF101 datasets.

Qualitative results can only be judged by the research team’s own assessment and by user studies. At the same time, as the paper notes, traditional quantitative metrics are largely unsuited to the task at hand:

‘[Reconstruction] metrics like PSNR, SSIM, and LPIPS fail to capture the quality of interpolated frames accurately, since they penalize other plausible interpolation results that are not pixel-aligned with the original video.

‘While generation metrics such as FID offer some improvement, they still fall short as they do not account for temporal consistency and evaluate frames in isolation.’
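A toy example illustrates the authors’ point about pixel alignment. Below, a correctly drawn square that is simply displaced by 8 pixels (a plausible outcome when the exact motion between two keyframes is ambiguous) receives exactly the same PSNR as a frame in which the square is missing altogether. The images are synthetic and not drawn from the paper.

```python
import numpy as np

def psnr(reference, candidate, max_value=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer pixel agreement."""
    mse = np.mean((reference.astype(np.float64) - candidate.astype(np.float64)) ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(max_value ** 2 / mse)

# Toy ground-truth middle frame: a 16x16 bright square on a dark 64x64 background.
truth = np.zeros((64, 64))
truth[24:40, 24:40] = 1.0

shifted = np.roll(truth, 8, axis=1)   # same square, placed 8 px to the right
blank = np.zeros_like(truth)          # the square is missing entirely

score_shifted = psnr(truth, shifted)
score_blank = psnr(truth, blank)
# Both differ from the truth on 256 pixels, so both score 10*log10(16), about 12.04 dB.
```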

Despite this, the researchers conducted quantitative tests with several popular metrics:

Quantitative results for Framer vs. rival systems.

The authors note that despite having the odds stacked against them, Framer still achieves the best FVD (Fréchet Video Distance) score among the methods tested.
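FVD fits a Gaussian to features extracted from real videos and another to features from generated videos (the standard metric uses a pretrained I3D network as the feature extractor), then measures the Fréchet distance between the two Gaussians. The sketch below covers only that distance computation; the feature extractor is omitted and the toy inputs are hypothetical.

```python
import numpy as np

def _sqrtm_psd(mat):
    """Symmetric square root of a positive semi-definite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh(mat)
    vals = np.clip(vals, 0.0, None)  # drop tiny negative values caused by round-off
    return (vecs * np.sqrt(vals)) @ vecs.T

def frechet_distance(mu1, cov1, mu2, cov2):
    """Fréchet distance between N(mu1, cov1) and N(mu2, cov2):
    ||mu1 - mu2||^2 + Tr(cov1 + cov2 - 2 (cov1 cov2)^(1/2)).

    Tr((cov1 cov2)^(1/2)) equals Tr((s cov2 s)^(1/2)) with s = cov1^(1/2);
    s cov2 s is symmetric PSD, so only symmetric square roots are needed.
    """
    s = _sqrtm_psd(cov1)
    cross = _sqrtm_psd(s @ cov2 @ s)
    diff = mu1 - mu2
    return float(diff @ diff + np.trace(cov1) + np.trace(cov2) - 2.0 * np.trace(cross))

def fit_gaussian(features):
    """features: (N, D) per-video embeddings. Returns (mean, covariance)."""
    return features.mean(axis=0), np.cov(features, rowvar=False)

d = 3
same = frechet_distance(np.zeros(d), 2 * np.eye(d), np.zeros(d), 2 * np.eye(d))
shifted = frechet_distance(np.zeros(d), 2 * np.eye(d), np.array([3.0, 4.0, 0.0]), 2 * np.eye(d))
scaled = frechet_distance(np.zeros(d), np.eye(d), np.zeros(d), 4 * np.eye(d))
mu, cov = fit_gaussian(np.random.default_rng(3).normal(size=(200, d)))
```

Because the comparison is between feature distributions over whole clips rather than between aligned pixels, FVD does not penalize a plausible but differently-timed interpolation in the way PSNR does, which helps explain why the authors emphasize it.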

Below are the paper’s sample results for a qualitative comparison:

Qualitative comparison against former approaches. Please refer to the paper for better resolution, as well as video results at https://www.youtube.com/watch?v=4MPGKgn7jRc.

The authors comment:

‘[Our] method produces significantly clearer textures and natural motion compared to existing interpolation methods. It performs especially well in scenarios with substantial differences between the input frames, where traditional methods often fail to interpolate content accurately.

‘Compared to other diffusion-based methods like LDMVFI and SVDKFI, Framer demonstrates superior adaptability to challenging cases and offers better control.’

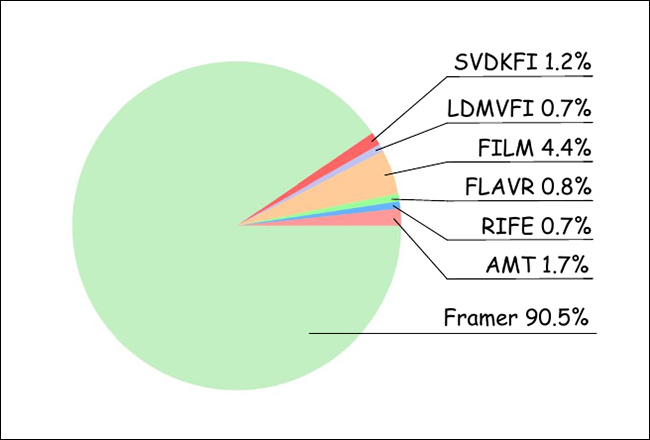

For the user study, the researchers gathered 20 participants, who assessed 100 randomly-ordered video results from the various methods tested, choosing the most ‘realistic’ option in each case. In total, 1,000 ratings were obtained:

Results from the user study.

As can be seen from the graph above, users overwhelmingly favored results from Framer.

The project’s accompanying YouTube video outlines some of the other potential uses for Framer, including morphing and cartoon in-betweening, where the whole concept began.

Conclusion

It is hard to over-emphasize how important this issue currently is for AI-based video generation. To date, older solutions such as FILM and the (non-AI) EbSynth have been used by both amateur and professional communities for tweening between frames; but these solutions come with notable limitations.

Because of the disingenuous curation of official example videos for new T2V frameworks, there is a widespread public misconception that machine learning systems can accurately infer geometry in motion without recourse to guidance mechanisms such as 3D morphable models (3DMMs), or other ancillary approaches, such as LoRAs.

To be honest, tweening itself, even if it could be perfectly executed, only constitutes a ‘hack’ or cheat upon this problem. Nonetheless, since it is often easier to produce two well-aligned frame images than to effect guidance via text prompts or the current range of alternatives, it is good to see iterative progress on an AI-based version of this older method.

First published Tuesday, October 29, 2024