Image by Editor | Midjourney & Canva

Robin Sharma said, “Every master was once a beginner. Every pro was once an amateur.” You have heard about large language models (LLMs), AI, and Transformer models (GPT) making waves in the AI space for a while, and you are unsure how to get started. I can assure you that everyone you see today building complex applications was once in the same position.

That is why this article gives you the knowledge you need to start building LLM apps with the Python programming language. It is strictly beginner-friendly, and you can code along while reading.

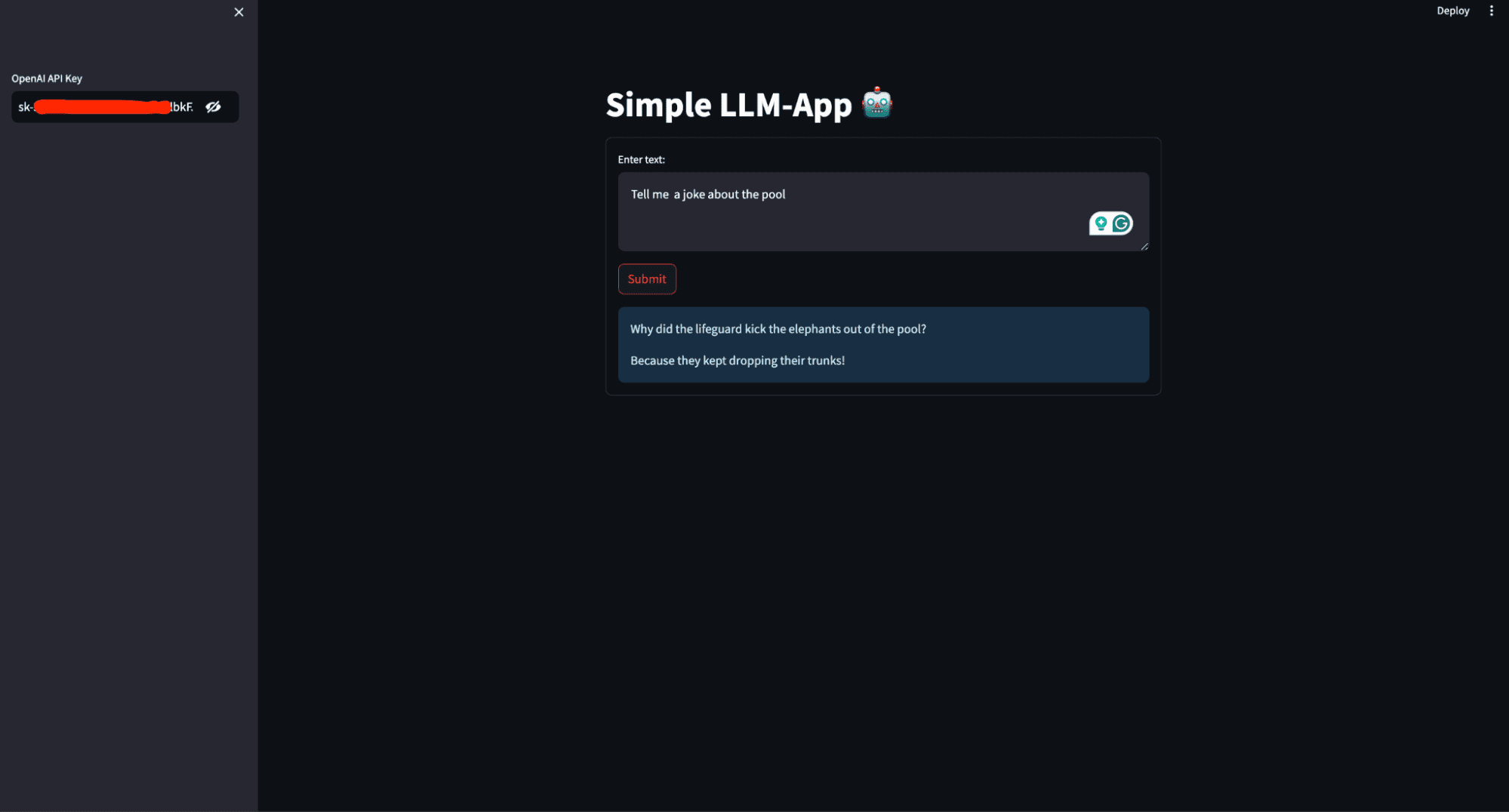

What’s going to you construct on this article? You’ll create a easy AI private assistant that generates a response primarily based on the person’s immediate and deploys it to entry it globally. The picture beneath exhibits what the completed utility seems to be like.

This picture exhibits the person interface of the AI private assistant that shall be constructed on this article

Prerequisites

To follow along with this article, there are a few things you need to have in place. These include:

- Python 3.8 or later (LangChain and recent Streamlit releases do not support older versions), and some experience writing Python scripts.

- OpenAI: OpenAI is a research organization and technology company that aims to ensure artificial general intelligence (AGI) benefits all of humanity. One of its key contributions is the development of advanced LLMs such as GPT-3 and GPT-4. These models can understand and generate human-like text, making them powerful tools for applications like chatbots, content creation, and more.

Sign up for OpenAI and copy your API key from the API section of your account so that you can access the models. Install the OpenAI package on your computer with `pip install openai`.

- LangChain: LangChain is a framework designed to simplify the development of applications that leverage LLMs. It provides tools and utilities to manage and streamline the various aspects of working with LLMs, making it easier to build complex and robust applications.

Install LangChain on your computer with `pip install langchain`.

- Streamlit: Streamlit is a powerful and easy-to-use Python library for creating web applications. It lets you create interactive web applications using Python alone. You do not need expertise in web development (HTML, CSS, JavaScript) to build functional and visually appealing web apps.

It is useful for building machine learning and data science apps, including those that use LLMs. Install Streamlit on your computer with `pip install streamlit`.
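Before moving on, it can help to confirm that the three packages are importable from the Python environment you plan to use. The sketch below is not part of the original walkthrough: it relies only on the standard library, and the helper name `missing_packages` is a hypothetical name chosen for illustration.

```python
from importlib.util import find_spec

# Top-level import names of the packages listed in the prerequisites.
REQUIRED_PACKAGES = ["streamlit", "openai", "langchain"]


def missing_packages(names):
    """Return the names that cannot be found in the current environment."""
    return [name for name in names if find_spec(name) is None]


missing = missing_packages(REQUIRED_PACKAGES)
if missing:
    print("Not installed:", ", ".join(missing))
else:
    print("All required packages are installed.")
```

If anything is reported as missing, install it with pip inside the same environment and run the check again.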

Code Along

With all the required packages and libraries installed, it is time to start building the LLM application. Create a requirements.txt file in the root of your working directory and list the dependencies in it.

streamlit

openai

langchain

Create an app.py file and add the code beneath.

# Importing the necessary modules from the Streamlit and LangChain packages
import streamlit as st
from langchain.llms import OpenAI

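- import streamlit as st imports the Streamlit library, which is used to create interactive web applications.

- from langchain.llms import OpenAI imports the OpenAI class from the langchain.llms module, which is used to interact with OpenAI's language models. Newer LangChain releases provide this class through the separate langchain-openai package (from langchain_openai import OpenAI), so adjust the import if your installed version reports that langchain.llms is unavailable.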

# Setting the title of the Streamlit application
st.title('Easy LLM-App 🤖')

- st.title('Easy LLM-App 🤖') sets the title of the Streamlit web application.

# Creating a sidebar input widget for the OpenAI API key; the input type is password for security
openai_api_key = st.sidebar.text_input('OpenAI API Key', type="password")

- openai_api_key = st.sidebar.text_input('OpenAI API Key', type="password") creates a text input widget in the sidebar for the user to enter their OpenAI API key. The input type is set to 'password' to hide the entered text for security.

# Defining a function to generate a response using the OpenAI language model
def generate_response(input_text):
    # Initializing the OpenAI language model with a specified temperature and API key
    llm = OpenAI(temperature=0.7, openai_api_key=openai_api_key)
    # Displaying the generated response as an informational message in the Streamlit app
    st.info(llm(input_text))

- def generate_response(input_text) defines a function named generate_response that takes input_text as an argument.

- llm = OpenAI(temperature=0.7, openai_api_key=openai_api_key) initializes the OpenAI class with a temperature setting of 0.7 and the provided API key.

Temperature is a parameter that controls the randomness, or creativity, of the text generated by a language model. It determines how much variability the model introduces into its predictions.

- Low temperature (0.0 – 0.5): Makes the model more deterministic and focused.

- Medium temperature (0.5 – 1.0): Provides a balance between randomness and determinism.

- High temperature (1.0 and above): Increases the randomness of the output. Higher values make the model more creative and diverse in its responses, but can also lead to less coherent, nonsensical, or off-topic outputs.

- st.info(llm(input_text)) calls the language model with the provided input_text and displays the generated response as an informational message in the Streamlit app.
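To make the temperature ranges above more concrete, here is a minimal sketch of temperature-scaled softmax, the way temperature is commonly described for sampling from a language model. It is an illustration only and says nothing about how OpenAI implements the parameter internally; the function name `softmax_with_temperature` and the example scores are assumptions made up for this sketch.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, dividing by the temperature first."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [score / temperature for score in logits]
    # Subtract the maximum before exponentiating for numerical stability.
    highest = max(scaled)
    exps = [math.exp(value - highest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical scores for three candidate next tokens.
example_logits = [2.0, 1.0, 0.5]

for temperature in (0.2, 0.7, 1.5):
    probabilities = softmax_with_temperature(example_logits, temperature)
    print(temperature, [round(p, 3) for p in probabilities])
```

At a low temperature almost all of the probability goes to the highest-scoring token, while a higher temperature spreads it more evenly, which is why the output becomes more varied.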

# Creating a form in the Streamlit app for user input
with st.form('my_form'):
    # Adding a text area for user input
    text = st.text_area('Enter text:', '')
    # Adding a submit button for the form
    submitted = st.form_submit_button('Submit')
    # Displaying a warning if the entered API key does not start with 'sk-'
    if not openai_api_key.startswith('sk-'):
        st.warning('Please enter your OpenAI API key!', icon='⚠')
    # If the form is submitted and the API key is valid, generate a response
    if submitted and openai_api_key.startswith('sk-'):
        generate_response(text)

- with st.form('my_form') creates a form container named my_form.

- text = st.text_area('Enter text:', '') adds a text area input widget within the form for the user to enter text.

- submitted = st.form_submit_button('Submit') adds a submit button to the form.

- if not openai_api_key.startswith('sk-') checks whether the entered API key does not start with sk-.

- st.warning('Please enter your OpenAI API key!', icon='⚠') displays a warning message if the API key is invalid.

- if submitted and openai_api_key.startswith('sk-') checks whether the form has been submitted and the API key is valid.

- generate_response(text) calls the generate_response function with the entered text to generate and display the response.
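The two conditions in the form can also be read as plain functions, which makes them easy to check without starting Streamlit. This is a minimal sketch of the same logic; the function names `needs_key_warning` and `should_generate` are hypothetical and do not appear in the app.

```python
def needs_key_warning(api_key: str) -> bool:
    """True when the key does not have the 'sk-' prefix the app looks for."""
    return not api_key.startswith("sk-")


def should_generate(submitted: bool, api_key: str) -> bool:
    """True only when the form was submitted and the key has the expected prefix."""
    return submitted and api_key.startswith("sk-")


# An empty sidebar field triggers the warning and blocks generation.
print(needs_key_warning(""), should_generate(True, ""))
# A key with the expected prefix passes once the form is submitted.
print(needs_key_warning("sk-example"), should_generate(True, "sk-example"))
```

Keep in mind that the prefix test only checks the format of the key. A string that starts with sk- can still be rejected by the API if it is not a real, active key.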

Putting it all together, here is what you have:

# Importing the necessary modules from the Streamlit and LangChain packages
import streamlit as st
from langchain.llms import OpenAI

# Setting the title of the Streamlit application
st.title('Easy LLM-App 🤖')

# Creating a sidebar input widget for the OpenAI API key; the input type is password for security
openai_api_key = st.sidebar.text_input('OpenAI API Key', type="password")

# Defining a function to generate a response using the OpenAI model
def generate_response(input_text):
    # Initializing the OpenAI model with a specified temperature and API key
    llm = OpenAI(temperature=0.7, openai_api_key=openai_api_key)
    # Displaying the generated response as an informational message in the Streamlit app
    st.info(llm(input_text))

# Creating a form in the Streamlit app for user input
with st.form('my_form'):
    # Adding a text area for user input (empty by default)
    text = st.text_area('Enter text:', '')
    # Adding a submit button for the form
    submitted = st.form_submit_button('Submit')
    # Displaying a warning if the entered API key does not start with 'sk-'
    if not openai_api_key.startswith('sk-'):
        st.warning('Please enter your OpenAI API key!', icon='⚠')
    # If the form is submitted and the API key is valid, generate a response
    if submitted and openai_api_key.startswith('sk-'):
        generate_response(text)

Running the application

The application is ready. Because it is a Streamlit app, you start it with the streamlit command-line tool rather than by running the script with python directly.

Running streamlit run app.py gives you an interactive web application where users can enter prompts and receive LLM-generated text responses.

When you execute streamlit run app.py, the following happens:

- Streamlit server starts: Streamlit starts a local web server on your machine, typically accessible at `http://localhost:8501` by default.

- Code execution: Streamlit reads and executes the code in `app.py`, rendering the app as defined in the script.

- Web interface: Your web browser automatically opens (or you can manually navigate) to the URL provided by Streamlit (usually `http://localhost:8501`), where you can interact with your LLM app.
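If you would rather start the app from a Python script, for example to pick a different port when 8501 is already taken, the command can be assembled as a list and handed to subprocess. This is a sketch under assumptions: the helper name `build_run_command` is hypothetical, and it relies on Streamlit being runnable as a module (python -m streamlit) and on its `--server.port` option.

```python
import sys


def build_run_command(script="app.py", port=None):
    """Build the argument list for launching a Streamlit script."""
    # Using the current interpreter keeps the launch inside the same environment.
    command = [sys.executable, "-m", "streamlit", "run", script]
    if port is not None:
        command += ["--server.port", str(port)]
    return command


print(build_run_command())
print(build_run_command(port=8502))

# To actually launch the app, uncomment the following lines:
# import subprocess
# subprocess.run(build_run_command(), check=True)
```

For everyday use, typing streamlit run app.py in the terminal does the same thing with less ceremony.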

Deploying your LLM application

Deploying an LLM app means making it accessible over the internet so that others can use and test it without needing access to your local computer. This is important for collaboration, user feedback, and real-world testing, and it helps ensure the app performs well in different environments.

To deploy the app to Streamlit Community Cloud, follow these steps:

- Create a GitHub repository for your app. Make sure the repository includes two files: app.py and requirements.txt.

- Go to Streamlit Community Cloud, click the “New app” button in your workspace, and specify the repository, branch, and main file path.

- Click the Deploy button. Your LLM application will be deployed to Streamlit Community Cloud, where it can be accessed globally.
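A quick local check before pushing to GitHub can catch a missing or misnamed file, such as requirement.txt instead of requirements.txt. The sketch below uses only the standard library; the helper name `missing_deploy_files` is hypothetical, and the file list simply mirrors the two files named in the steps above.

```python
from pathlib import Path

# The two files the deployment steps say the repository must contain.
REQUIRED_FILES = ("app.py", "requirements.txt")


def missing_deploy_files(repo_dir="."):
    """Return the required files that are not present in repo_dir."""
    root = Path(repo_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]


missing = missing_deploy_files(".")
if missing:
    print("Missing before deploy:", ", ".join(missing))
else:
    print("Repository has app.py and requirements.txt.")
```

Run it from the root of your project directory; an empty result means both files are where the deployment expects them.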

Conclusion

Congratulations! You have taken your first steps in building and deploying an LLM application with Python. Starting from the prerequisites, through installing the necessary libraries and writing the core application code, you have created a functional AI personal assistant. By using Streamlit, you have made your app interactive and easy to use, and by deploying it to Streamlit Community Cloud, you have made it accessible to users worldwide.

With the skills you have learned in this guide, you can dive deeper into LLMs and AI, exploring more advanced features and building even more sophisticated applications. Keep experimenting, learning, and sharing your knowledge with the community. The possibilities with LLMs are vast, and your journey has just begun. Happy coding!

Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.