While everybody’s been buzzing about AI agents and automation, AMD and Johns Hopkins University have been working on improving how humans and AI collaborate in research. Their new open-source framework, Agent Laboratory, is a complete reimagining of how scientific research can be accelerated through human-AI teamwork.

After looking at numerous AI research frameworks, I find that Agent Laboratory stands out for its practical approach. Instead of trying to replace human researchers (like many existing solutions), it focuses on supercharging their capabilities by handling the time-consuming aspects of research while keeping humans in the driver’s seat.

The core innovation here is simple but powerful: rather than pursuing fully autonomous research (which often leads to questionable results), Agent Laboratory creates a virtual lab where multiple specialized AI agents work together, each handling different aspects of the research process while staying anchored to human guidance.

Breaking Down the Virtual Lab

Think of Agent Laboratory as a well-orchestrated research team, but with AI agents playing specialized roles. Just like a real research lab, each agent has specific responsibilities and expertise:

- A PhD agent tackles literature reviews and research planning

- Postdoc agents help refine experimental approaches

- ML Engineer agents handle the technical implementation

- Professor agents evaluate and score research outputs

What makes this system particularly interesting is its workflow. Unlike traditional AI tools that operate in isolation, Agent Laboratory creates a collaborative environment where these agents interact and build upon each other’s work.

The process follows a natural research progression:

- Literature Review: The PhD agent scours academic papers using the arXiv API, gathering and organizing relevant research

- Plan Formulation: PhD and postdoc agents team up to create detailed research plans

- Implementation: ML Engineer agents write and test code

- Analysis & Documentation: The team works together to interpret results and generate comprehensive reports
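The staged progression above can be pictured as a simple pipeline in which each stage reads what earlier stages produced. The following is a minimal sketch under stated assumptions, not Agent Laboratory's actual code: the `Stage` class, the `run_pipeline` function, and the stub lambdas are all hypothetical names invented for illustration, and the stubs return placeholder strings instead of calling a language model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical stage description. Stage names and roles mirror the list above,
# but this structure is an assumption, not the framework's real API.
@dataclass
class Stage:
    name: str
    roles: List[str]
    run: Callable[[Dict[str, str]], str]  # reads shared context, returns an artifact


def run_pipeline(stages: List[Stage], topic: str) -> Dict[str, str]:
    """Run stages in order, letting each one see every earlier artifact."""
    context: Dict[str, str] = {"topic": topic}
    for stage in stages:
        context[stage.name] = stage.run(context)
    return context


# Stub agents: each returns a placeholder string instead of calling a model.
stages = [
    Stage("literature_review", ["PhD"],
          lambda ctx: f"papers related to {ctx['topic']}"),
    Stage("plan_formulation", ["PhD", "Postdoc"],
          lambda ctx: f"plan based on {ctx['literature_review']}"),
    Stage("implementation", ["ML Engineer"],
          lambda ctx: f"code implementing {ctx['plan_formulation']}"),
    # The article only says "the team" here, so listing every role is a guess.
    Stage("analysis_and_documentation",
          ["PhD", "Postdoc", "ML Engineer", "Professor"],
          lambda ctx: f"report on {ctx['implementation']}"),
]

result = run_pipeline(stages, "image classification")
print(result["analysis_and_documentation"])
```

Passing a shared context dictionary forward is one simple way to let agents build on each other's work; a real system would likely store richer artifacts than strings.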

But here’s where it gets really practical: the framework is compute-flexible, meaning researchers can allocate resources based on their access to computing power and budget constraints. This makes it a tool designed for real-world research environments.

Schmidgall et al.

The Human Factor: Where AI Meets Expertise

While Agent Laboratory packs impressive automation capabilities, the real magic happens in what they call “co-pilot mode.” In this setup, researchers can provide feedback at each stage of the process, creating a genuine collaboration between human expertise and AI assistance.
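One way to picture a feedback checkpoint like this is a loop that reruns a stage until the researcher accepts its output. This is only a sketch of the general idea: `run_with_copilot`, `get_feedback`, `max_rounds`, and `draft_plan` are hypothetical names, and the scripted reviewer stands in for a real person.

```python
from typing import Callable, Optional


def run_with_copilot(
    stage: Callable[[str], str],
    task: str,
    get_feedback: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> str:
    """Run a stage, then revise it until the human approves or rounds run out."""
    output = stage(task)
    for _ in range(max_rounds):
        feedback = get_feedback(output)
        if feedback is None:  # None means the researcher accepts the output
            break
        # Feed the reviewer's note back into the stage and try again.
        output = stage(f"{task}\nReviewer feedback: {feedback}")
    return output


# Stub stage: a real stage would call a language model instead.
def draft_plan(prompt: str) -> str:
    return "plan with baseline" if "baseline" in prompt else "plan"


# Scripted reviewer: one round of feedback, then approval.
notes = iter(["add a baseline comparison", None])
final = run_with_copilot(draft_plan, "Design the experiment", lambda out: next(notes))
print(final)  # plan with baseline
```

Capping the number of rounds keeps a stage from looping indefinitely; the right limit would depend on how much reviewer time is available.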

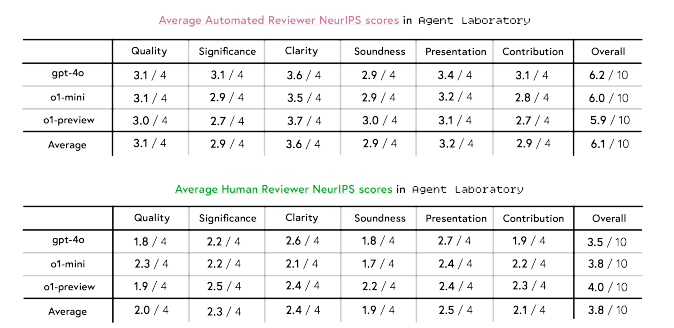

The co-pilot feedback data reveals some compelling insights. In the autonomous mode, Agent Laboratory-generated papers scored an average of 3.8/10 in human evaluations. But when researchers engaged in co-pilot mode, these scores jumped to 4.38/10. What is particularly interesting is where these improvements showed up: papers scored noticeably higher in clarity (+0.23) and presentation (+0.33).

But here is the reality check: even with human involvement, these papers still scored about 1.45 points below the average accepted NeurIPS paper (which sits at 5.85). This isn’t a failure, but it is a crucial lesson about how AI and human expertise need to complement each other.

The evaluation revealed something else fascinating: AI reviewers consistently rated papers about 2.3 points higher than human reviewers. This gap highlights why human oversight remains crucial in research evaluation.


Breaking Down the Numbers

What really matters in a research environment? The cost and performance. Agent Laboratory’s approach to model comparison reveals some surprising efficiency gains in this regard.

GPT-4o emerged as the speed champion, completing the entire workflow in just 1,165.4 seconds. That is 3.2x faster than o1-mini and 5.3x faster than o1-preview. But what’s even more important is that it only costs $2.33 per paper. Compared to previous autonomous research methods that cost around $15, we’re looking at an 84% cost reduction.
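The 84% figure follows directly from the two quoted costs, and the speedup ratios imply rough runtimes for the other models. The short calculation below uses only the numbers in this article; the variable names are made up for illustration, and the implied runtimes are back-of-the-envelope estimates from rounded ratios, not reported measurements.

```python
gpt4o_seconds = 1165.4  # full workflow runtime quoted for GPT-4o
gpt4o_cost = 2.33       # dollars per paper
prior_cost = 15.00      # "around $15" for earlier autonomous methods

# Fraction of the earlier cost that is saved.
cost_reduction = 1 - gpt4o_cost / prior_cost
print(f"cost reduction: {cost_reduction:.0%}")  # cost reduction: 84%

# Runtimes implied by the quoted speedups (estimates only, since the
# ratios are rounded in the article).
speedups = {"o1-mini": 3.2, "o1-preview": 5.3}
implied_seconds = {model: gpt4o_seconds * factor for model, factor in speedups.items()}
for model, seconds in implied_seconds.items():
    print(f"{model}: roughly {seconds / 60:.0f} minutes")
```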

Model performance:

- o1-preview scored highest in usefulness and clarity

- o1-mini achieved the best experimental quality scores

- GPT-4o lagged in metrics but led in cost-efficiency

The real-world implications here are significant.

Researchers can now choose their approach based on their specific needs:

- Need rapid prototyping? GPT-4o offers speed and cost efficiency

- Prioritizing experimental quality? o1-mini might be your best bet

- Looking for the most polished output? o1-preview shows promise

This flexibility means research teams can adapt the framework to their resources and requirements, rather than being locked into a one-size-fits-all solution.

A New Chapter in Research

After looking into Agent Laboratory’s capabilities and results, I’m convinced that we’re looking at a significant shift in how research will be conducted. But it’s not the narrative of replacement that often dominates headlines; it’s something far more nuanced and powerful.

While Agent Laboratory’s papers are not yet hitting top conference standards on their own, they are creating a new paradigm for research acceleration. Think of it like having a team of AI research assistants who never sleep, each specializing in different aspects of the scientific process.

The implications for researchers are profound:

- Time spent on literature reviews and basic coding could be redirected to creative ideation

- Research ideas that might have been shelved due to resource constraints become viable

- The ability to rapidly prototype and test hypotheses could lead to faster breakthroughs

Current limitations, like the gap between AI and human review scores, are opportunities. Each iteration of these systems brings us closer to more sophisticated research collaboration between humans and AI.

Looking ahead, I see three key developments that could reshape scientific discovery:

- More sophisticated human-AI collaboration patterns will emerge as researchers learn to leverage these tools effectively

- The cost and time savings could democratize research, allowing smaller labs and institutions to pursue more ambitious projects

- The rapid prototyping capabilities could lead to more experimental approaches in research

The key to maximizing this potential? Understanding that Agent Laboratory and similar frameworks are tools for amplification, not automation. The future of research isn't about choosing between human expertise and AI capabilities; it's about finding innovative ways to combine them.