Image created by Author

Introduction to RAG

In the constantly evolving world of language models, one steadfast methodology of particular note is Retrieval Augmented Generation (RAG). RAG incorporates elements of Information Retrieval (IR) within the framework of a text-generation language model, with the goal of generating human-like text that is more useful and accurate than what the default language model would generate alone. We will introduce the elementary concepts of RAG in this post, with an eye toward building some RAG systems in subsequent posts.

RAG Overview

Language models are created using vast, generic datasets that are not tailored to your own personal or customized data. To contend with this reality, RAG can combine your particular data with the existing “knowledge” of a language model. To facilitate this, what must be done, and what RAG does, is to index your data to make it searchable. When a search against your data is executed, the relevant and important information is extracted from the indexed data, and can be used within a query against a language model to return a relevant and useful response made by the model. For any AI engineer, data scientist, or developer building chatbots, modern information retrieval systems, or other types of personal assistants, an understanding of RAG, and the knowledge of how to leverage your own data, is vitally important.

Simply put, RAG is a novel technique that enriches language models with retrieval functionality. It incorporates IR mechanisms into the generation process, and these mechanisms can personalize (augment) the model’s inherent “knowledge” used for generative purposes.

To summarize, RAG involves the following high-level steps:

- Retrieve information from your customized data sources

- Add this data to your prompt as additional context

- Have the LLM generate a response based on the augmented prompt
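The three steps above can be sketched in a few lines of Python. This is only an illustrative outline, not a reference implementation: the `DOCUMENTS` list, the word-overlap ranking in `retrieve`, the prompt template, and the `echo_llm` stand-in for a model call are all assumptions made for the example.

```python
# Hypothetical in-memory "custom data source" (assumption for illustration).
DOCUMENTS = [
    "Our store ships orders within two business days.",
    "Returns are accepted within 30 days of delivery.",
    "Gift cards never expire and can be used on any product.",
]


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(question, documents, top_k=1):
    """Step 1: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def augment(question, context):
    """Step 2: add the retrieved text to the prompt as additional context."""
    context_block = "\n".join(f"- {passage}" for passage in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {question}"
    )


def echo_llm(prompt):
    """Stand-in for a real language model call (assumption)."""
    return f"[model output for a prompt of {len(prompt)} characters]"


def generate(prompt, llm):
    """Step 3: have the model respond to the augmented prompt."""
    return llm(prompt)


question = "How many days do I have for returns?"
context = retrieve(question, DOCUMENTS)
prompt = augment(question, context)
answer = generate(prompt, echo_llm)
print(prompt)
print(answer)
```

Word overlap is used here only because it needs no external libraries. A production system would more likely rank documents by embedding similarity.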

RAG provides these advantages over the alternative of model fine-tuning:

- No training occurs with RAG, so there is no fine-tuning cost or time

- Customized data is as fresh as you make it, and so the model can effectively remain up to date

- The specific customized data documents can be cited during (or following) the process, and so the system is much more verifiable and trustworthy
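The freshness and citation points can be illustrated with a small sketch. It assumes a toy knowledge base in which every record carries a hypothetical `source` label, and it again uses simple word overlap in place of a real retriever.

```python
# Each record keeps its text together with a hypothetical source label,
# so a response can point back to the document it drew on.
knowledge_base = [
    {"source": "pricing-2023.txt", "text": "The basic plan costs 10 dollars per month."},
]


def words(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve_with_source(question, records):
    """Return the record that shares the most words with the question."""
    question_words = words(question)
    return max(records, key=lambda record: len(question_words & words(record["text"])))


question = "How much does the premium plan cost?"
before = retrieve_with_source(question, knowledge_base)

# New data is usable as soon as it is added; no model weights are changed.
knowledge_base.append(
    {"source": "pricing-2024.txt", "text": "The premium plan costs 25 dollars per month."}
)
after = retrieve_with_source(question, knowledge_base)

print("Before update, cited source:", before["source"])
print("After update, cited source:", after["source"])
```

Because the source label travels with the retrieved text, the system can report where its context came from, which is the basis for the verifiability claim.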

A Closer Look

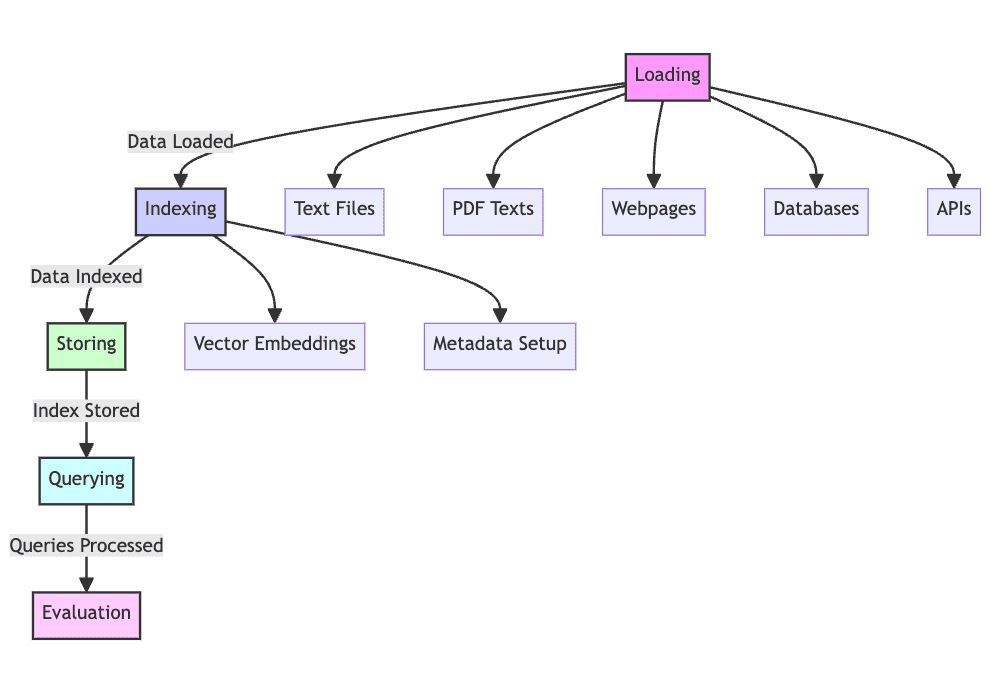

Upon a more detailed examination, we can say that a RAG system will progress through 5 phases of operation.

1. Load: Gathering the raw text data (from text files, PDFs, web pages, databases, and more) is the first of many steps, and it puts the text data into the processing pipeline. Without loading of data, RAG simply cannot function.

2. Index: The data you now have must be structured and maintained for retrieval, searching, and querying. Language models will use vector embeddings created from the content as numerical representations of the data, along with particular metadata, to allow for successful search results.

3. Store: Following its creation, the index must be saved alongside the metadata, ensuring this step does not need to be repeated regularly and allowing for easier RAG system scaling.

4. Query: With this index in place, the content can be traversed using the indexer and language model to process the dataset in response to various queries.

5. Evaluate: Assessing performance versus other possible generative steps is useful, whether when altering existing processes or when testing the inherent latency and accuracy of systems of this nature.

Image created by Author
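The first four phases described in this section can be sketched with the Python standard library alone. This is a minimal illustration under stated assumptions: the `raw` dictionary stands in for real files or web pages, `embed` uses word counts rather than a learned embedding model, and a JSON file stands in for a real index store. All function names are hypothetical.

```python
import json
import math
import os
import tempfile
from collections import Counter


def load(raw_sources):
    """Load: gather raw text. A dict stands in for files, PDFs, or web pages."""
    return [{"id": name, "text": text} for name, text in raw_sources.items()]


def embed(text):
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(word.strip(".,?!").lower() for word in text.split())


def index(documents):
    """Index: attach a vector to each document record."""
    return [{**doc, "vector": dict(embed(doc["text"]))} for doc in documents]


def store(built_index, path):
    """Store: persist the index so it does not need to be rebuilt each time."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(built_index, handle)


def restore(path):
    """Read a previously stored index back from disk."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(value * b.get(key, 0) for key, value in a.items())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def query(question, built_index, top_k=1):
    """Query: embed the question and return the ids of the closest documents."""
    question_vector = embed(question)
    ranked = sorted(
        built_index,
        key=lambda entry: cosine(question_vector, entry["vector"]),
        reverse=True,
    )
    return [entry["id"] for entry in ranked[:top_k]]


raw = {
    "shipping.txt": "Orders ship within two business days.",
    "returns.txt": "Items can be returned within 30 days.",
}
path = os.path.join(tempfile.mkdtemp(), "index.json")
store(index(load(raw)), path)
result = query("When will my orders ship?", restore(path))
print(result)
```

The retrieved documents would then be passed to a language model as context. Evaluation, the fifth phase, is left out here to keep the sketch short.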

A Short Example

Consider the following simple RAG implementation. Imagine that this is a system created to field customer enquiries about a fictitious online shop.

1. Loading: Content will come from product documentation, user reviews, and customer input, stored in multiple formats such as message boards, databases, and APIs.

2. Indexing: You will produce vector embeddings for product documentation, user reviews, and so on, alongside the indexing of metadata assigned to each data point, such as the product category or customer rating.

3. Storing: The index thus developed will be saved in a vector store, a specialized database for the storage and optimal retrieval of vectors, which is the form in which embeddings are stored.

4. Querying: When a customer query arrives, a vector store lookup will be done based on the question text, and language models are then employed to generate responses using the retrieved source data as context.

5. Evaluation: System performance will be evaluated by comparing it to other options, such as traditional language model retrieval, measuring metrics such as answer correctness, response latency, and overall user satisfaction, to ensure that the RAG system can be tweaked and honed to deliver superior results.

This example walkthrough should give you some sense of the methodology behind RAG and its use in conferring information retrieval capacity upon a language model.
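As a rough illustration of the shop scenario, the sketch below filters on metadata before ranking and then measures a simple hit rate and average latency over a handful of test questions. Everything in it is an assumption made for the example: the `SHOP_INDEX` entries, the `category` and `rating` fields, the word-overlap ranking used in place of a vector store lookup, and the test cases themselves.

```python
import time

# Hypothetical shop data: each entry carries text plus metadata.
SHOP_INDEX = [
    {"id": "doc-kettle", "category": "kitchen", "rating": 5,
     "text": "The kettle boils water in two minutes."},
    {"id": "review-kettle", "category": "kitchen", "rating": 2,
     "text": "My kettle stopped working after a week."},
    {"id": "doc-lamp", "category": "lighting", "rating": 4,
     "text": "The lamp has three brightness levels."},
]


def words(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return {word.strip(".,?!").lower() for word in text.split()}


def search(question, entries, category=None, min_rating=0):
    """Filter on metadata first, then rank the remainder by shared words."""
    candidates = [
        entry for entry in entries
        if (category is None or entry["category"] == category)
        and entry["rating"] >= min_rating
    ]
    question_words = words(question)
    return max(
        candidates,
        key=lambda entry: len(question_words & words(entry["text"])),
        default=None,
    )


def evaluate(test_cases, entries):
    """Report the retrieval hit rate and the average latency per query."""
    hits = 0
    start = time.perf_counter()
    for question, filters, expected_id in test_cases:
        result = search(question, entries, **filters)
        if result is not None and result["id"] == expected_id:
            hits += 1
    elapsed = time.perf_counter() - start
    return {
        "hit_rate": hits / len(test_cases),
        "avg_latency_s": elapsed / len(test_cases),
    }


TEST_CASES = [
    ("How fast does the kettle boil water?",
     {"category": "kitchen", "min_rating": 4}, "doc-kettle"),
    ("How many brightness levels does the lamp have?",
     {"category": "lighting"}, "doc-lamp"),
    ("Has anyone had a kettle stop working after a week?",
     {"category": "kitchen"}, "review-kettle"),
]

report = evaluate(TEST_CASES, SHOP_INDEX)
print(report)
```

Hit rate only checks whether the expected document was retrieved. Answer correctness and user satisfaction, also mentioned in the evaluation step, would need the generated responses and human or model-based judgments.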

Conclusion

This article introduced retrieval augmented generation, which combines text generation with information retrieval in order to improve the accuracy and contextual consistency of language model output. The method allows data stored in indexed sources to be extracted and incorporated into the generated output of language models. A RAG system of this kind can provide improved value over mere fine-tuning of a language model.

The next steps of our RAG journey will consist of learning the tools of the trade in order to implement some RAG systems of our own. We will first focus on utilizing tools from LlamaIndex such as data connectors, engines, and application connectors to ease the integration of RAG and its scaling. But we save this for the next article.

In forthcoming projects we will construct complex RAG systems and take a look at potential uses and improvements to RAG technology. The hope is to reveal many new possibilities in the realm of artificial intelligence, and to use these diverse data sources to build more intelligent and contextualized systems.

Matthew Mayo (@mattmayo13) holds a Master’s degree in computer science and a graduate diploma in data mining. As Managing Editor, Matthew aims to make complex data science concepts accessible. His professional interests include natural language processing, machine learning algorithms, and exploring emerging AI. He is driven by a mission to democratize knowledge in the data science community. Matthew has been coding since he was 6 years old.